Your Codebase Is the Agent's Operating Environment

I had a project split across two repos: a frontend and a backend. Shipping a feature looked the same every time. Build the backend endpoint. Map the request and response types into the frontend by hand. Start the frontend work. Find a field I missed. Go back to the backend. Repeat.

It was already a slog by hand. Once the project went agentic, it got worse. Every feature meant re-explaining the backend to the agent, pasting the day's backend changes into the prompt as they happened, or telling it to clone and read the other repo and hoping the context window survived.

The fix was structural, not prompt engineering. I collapsed both repos into a single monorepo with a shared-types package. Endpoints became typed contracts the agent could read rather than remember. The shape of the codebase did the work the prompt scaffolding had been doing badly.

The headline benchmark numbers say AI agents are senior engineers. Frontier models score 80-90%+ on SWE-Bench Verified (SWE-bench leaderboard, 2026). On SWE-EVO they collapse to 21% (arXiv 2512.18470, 2025). The variable between those two numbers is not the model. It is the shape of the codebase. This post is the observational case for treating code shape as a runtime input to your agents, with the 2026 numbers and the gotchas that show up when you do.

Key Takeaways

- Frontier agents score 80-90% on SWE-Bench Verified but drop to 21% on SWE-EVO and below 45% on SWE-Bench Pro. The variable is the shape of the codebase, not the size of the model (SWE-Bench Pro, 2025).

- Aider’s tree-sitter repo map identifies the correct file to edit on 70.3% of SWE-Bench Lite tasks with no embeddings at all (Aider, 2024).

- Vercel made Turborepo’s task graph 96% faster on a 1,000-package monorepo in 8 days using their own agents (Vercel, Mar 2026).

- The same things that made code legible to humans (small files, strong types, explicit imports, clean module boundaries) are exactly what your agent’s index, graph, and retriever depend on.

The agent doesn’t read your code, it queries it

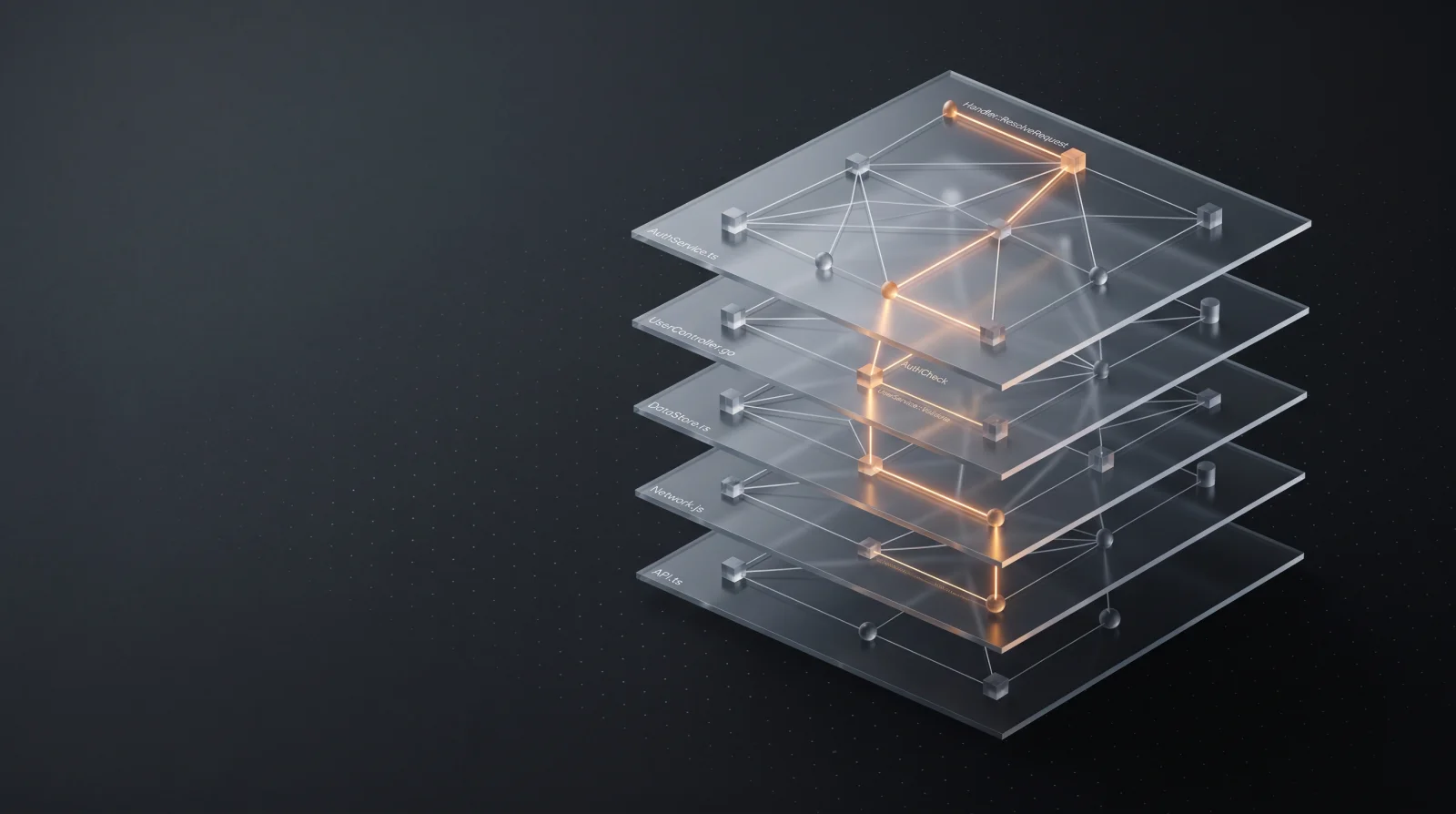

Agents do not load your repo into a context window. They issue queries against indexes, graphs, and retrievers, and the schema for those queries is whatever your codebase produces.

Coding agents today fall into three rough families.

Embeddings only. Cursor is the canonical example and one of the most-used coding agents in the market. Files are chunked on-device along function and class boundaries, embedded, and stored in a remote vector DB. At query time the prompt is embedded and a k-nearest-neighbours search returns the matching chunks (Cursor docs, 2025). Cursor reports semantic search lifts user request satisfaction by 12.5% on average (Cursor blog, 2025). The catch: chunking assumes function- and class-shaped code. Giant files chunk badly and retrieve worse.
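Below is a toy sketch of the embeddings-only shape. Each chunk carries a vector, the query vector is compared by cosine similarity, and the top k chunks come back. The vectors are hand-written stand-ins for a real embedding model, and the search is brute force rather than an approximate nearest-neighbour index. `Chunk`, `cosine` and `topK` are hypothetical names, not Cursor's API.

```typescript
// Toy embeddings-only retrieval: brute-force k-nearest-neighbours by cosine
// similarity. Vectors are hand-made stand-ins for a real embedding model.

interface Chunk {
  file: string;
  symbol: string; // the function or class the chunk was cut along
  vector: number[];
}

function cosine(a: number[], b: number[]): number {
  let dot = 0;
  let na = 0;
  let nb = 0;
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i];
    na += a[i] * a[i];
    nb += b[i] * b[i];
  }
  // Guard against zero vectors instead of returning NaN.
  return na === 0 || nb === 0 ? 0 : dot / (Math.sqrt(na) * Math.sqrt(nb));
}

function topK(query: number[], chunks: Chunk[], k: number): Chunk[] {
  return chunks
    .map((c) => ({ c, score: cosine(query, c.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((x) => x.c);
}

const index: Chunk[] = [
  { file: "billing.ts", symbol: "chargeCard", vector: [0.9, 0.1, 0.0] },
  { file: "billing.ts", symbol: "refund", vector: [0.7, 0.3, 0.1] },
  { file: "auth.ts", symbol: "login", vector: [0.0, 0.2, 0.9] },
];

const hits = topK([1, 0, 0], index, 2);
console.log(hits.map((h) => h.symbol).join(", ")); // chargeCard, refund
```

The retriever only ever sees chunk boundaries. If one 2,000-line function is one chunk, its single vector has to stand for everything inside it.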

Symbol graph. Aider builds a tree-sitter AST for every file, runs PageRank over the symbol graph, and stuffs the highest-ranked signatures into the prompt as a “repo map” (Aider, 2023). Sourcegraph Cody dropped embeddings in favour of BM25 plus a Repo-level Semantic Graph layered on top of SCIP indexes (Sourcegraph, 2024). Augment Code’s Context Engine pre-indexes call hierarchies and dependency graphs across hundreds of thousands of files (Augment, 2025). Different products, same bet: structure beats similarity at scale.
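The ranking step can be sketched as plain PageRank over a file-level reference graph, in the spirit of a repo map. This is a simplified illustration, not Aider's implementation. Aider works from tree-sitter tags and weights its edges. Here an edge A → B just means "file A references a symbol defined in file B", and the graph and file names are hypothetical.

```typescript
// File-level reference graph: an edge A -> B means "file A references a symbol
// defined in file B". Every referenced file must also appear as a key.
type Graph = Record<string, string[]>;

function pageRank(graph: Graph, damping = 0.85, iterations = 50): Map<string, number> {
  const nodes = Object.keys(graph);
  const n = nodes.length;
  const uniform = (value: number) => new Map(nodes.map((v): [string, number] => [v, value]));

  let rank = uniform(1 / n);
  for (let it = 0; it < iterations; it++) {
    // Every node starts with the teleport share, then receives rank along in-edges.
    const next = uniform((1 - damping) / n);
    for (const v of nodes) {
      const share = rank.get(v)!;
      // A file with no outgoing references spreads its rank evenly over all files.
      const targets = graph[v].length > 0 ? graph[v] : nodes;
      for (const u of targets) {
        next.set(u, next.get(u)! + (damping * share) / targets.length);
      }
    }
    rank = next;
  }
  return rank;
}

// Three files reference the shared types module, so it should rank highest.
const repo: Graph = {
  "api/handler.ts": ["shared/types.ts", "db/users.ts"],
  "web/form.tsx": ["shared/types.ts"],
  "db/users.ts": ["shared/types.ts"],
  "shared/types.ts": [],
};

const ranked = Array.from(pageRank(repo).entries()).sort((a, b) => b[1] - a[1]);
console.log(ranked[0][0]); // shared/types.ts
```

The heavily referenced module floats to the top. That is why strongly typed shared packages tend to dominate a repo map.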

Live LSP, or no index at all. Claude Code shipped native LSP support in version 2.0.74 (December 2025), exposing goToDefinition, findReferences, and friends across eleven languages (Claude Code plugins, 2025). Cline refuses to index entirely and uses ripgrep plus on-demand tree-sitter extraction inside its plan-and-act loop. Zed published the Agent Client Protocol in August 2025 as “the LSP for AI coding agents”; by March 2026 more than 25 agents support ACP, including Claude Code, Codex, SWE-Agent, Aider, and Gemini CLI.
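For a concrete sense of what "live LSP" means on the wire, here is a sketch of the go-to-definition request a client sends to a language server. The `textDocument/definition` method, the params shape and the `Content-Length` framing follow the Language Server Protocol spec. The file URI and position are made-up examples. A real session would first send `initialize` and `textDocument/didOpen`, which are omitted here.

```typescript
// Shape of the go-to-definition request an LSP client sends to a language server.
// Method name, params and framing follow the LSP spec; URI and position are examples.

interface LspPosition {
  line: number; // zero-based
  character: number; // zero-based, UTF-16 code units unless another encoding is negotiated
}

// Content-Length must count bytes of the UTF-8 body, not JavaScript string length.
function utf8ByteLength(s: string): number {
  let bytes = 0;
  for (const ch of s) {
    const cp = ch.codePointAt(0)!;
    bytes += cp < 0x80 ? 1 : cp < 0x800 ? 2 : cp < 0x10000 ? 3 : 4;
  }
  return bytes;
}

function definitionRequest(id: number, uri: string, position: LspPosition): string {
  const body = JSON.stringify({
    jsonrpc: "2.0",
    id,
    method: "textDocument/definition",
    params: { textDocument: { uri }, position },
  });
  // Base protocol framing: header, blank line, then the JSON-RPC body.
  return `Content-Length: ${utf8ByteLength(body)}\r\n\r\n${body}`;
}

const msg = definitionRequest(1, "file:///repo/src/billing.ts", { line: 41, character: 9 });
```

The server answers with a `Location`, an array of `Location` or `LocationLink` values, or `null`. The answer comes from the compiler's model of the code, not from similarity.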

The layer between your code and your model is a stack: parsers, indexes, graphs, protocols. Code that parses cleanly, indexes precisely, and exposes typed signatures gets a higher-resolution view at every layer. Code that hides behaviour at runtime starves all of them. The post “What local code intelligence actually does” is the long version of that point.

| Agent | Retrieval mechanism |
|---|---|
| Cursor | Chunked embeddings, remote vector DB |
| Aider | Tree-sitter AST plus PageRank repo map |
| Cline | Ripgrep plus on-demand tree-sitter, no index |
| Claude Code | Native LSP (since 2.0.74) plus MCP tools |

The benchmarks lie quietly

The gap between SWE-Bench Verified and the harder benchmarks is the whole story. The headline number is about the test bed, not the model.

Frontier models are saturating SWE-Bench Verified. Claude Mythos Preview at 93.9%, Claude Opus 4.7 at 87.6%, GPT-5.3 Codex at 85%; the average across 83 evaluated models is 63.4% (SWE-bench leaderboard, 2026). If you stop reading at the leaderboard, agents are senior engineers.

Then you change the test bed and the floor falls out.

SWE-Bench Pro draws 1,865 problems from 41 actively maintained repos: B2B systems, business applications, dev tools. Reference solutions average 107 lines of code across four files. The same frontier models that score above 80% on Verified stay below 45% Pass@1 here (SWE-Bench Pro paper, 2025).

SWE-EVO is harder still. It tests evolution: long-horizon work, ambiguous specs, mixed-version codebases. GPT-5 resolves 21% of evolution tasks against 65% on Verified (arXiv 2512.18470, 2025). Stronger models miss instruction nuance; weaker models break on syntax and tool use. The capability gap is not raw reasoning. It is the shape of the task.

Columbia’s DAPLab put the same observation more bluntly in January 2026: “agents make increasingly more failures as the number of files in the codebase grows” (DAPLab, 2026).

OpenAI stopped reporting Verified scores in early 2026 because the benchmark is contaminated: every frontier model could reproduce verbatim gold patches for some tasks, and 59% of unsolved Verified problems have flawed test cases (SWE-Bench Pro paper, 2025).

Verified is mature, well-tested Python: Django, scikit-learn, and a handful of similar projects. Pro and EVO are messier, larger, and less Django-shaped. The drop tells you how much of the headline score belongs to the model and how much belongs to a carefully shaped test bed. The interesting question is not which model is best. It is what kind of repo the model was best on.

Monorepos are a substrate, not a religion

At the product level, the monorepo is now the highest-leverage agent-ergonomics decision you can make.

The cleanest evidence comes from Vercel’s own dogfooding. In March 2026 the Turborepo team used agents and Vercel Sandboxes to optimise their task-graph computation against a 1,000-package monorepo. They shipped 81-91% speedups across repo sizes, peaking at 96% on the largest, and the whole sprint took 8 days versus an estimated 2 months without agents (Vercel, Mar 2026). That work was only feasible because every package, the build graph, and the test runner sat in one place.

Three things make a monorepo good for agents.

Atomic cross-cutting changes. A schema edit lands in one commit alongside its API handler and the frontend form that consumes it. One CI run proves the slice. The polyrepo equivalent is N coordinated PRs, one of which always merges late and breaks the others.

Shared types as ground truth. tRPC’s defining property is end-to-end type safety with zero codegen (tRPC, 2026): the router type is exported from one workspace package and consumed by every client. An agent editing a backend handler immediately sees frontend type errors in the same workspace. No second repo to clone, no generated client to regenerate, no contract to remember separately.
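Here is a minimal sketch of the shared-contract idea in plain TypeScript. `Contract`, `Handlers`, `handlers` and `call` are hypothetical and written by hand to keep the block self-contained. tRPC's real API infers the contract from the router instead, so read this as the shape of the idea, not how tRPC works internally.

```typescript
// Hypothetical shared contract, standing in for a workspace package
// (say, packages/contracts) imported by both backend and frontend.
type Contract = {
  getUser: { input: { id: string }; output: { id: string; name: string; email: string } };
  renameUser: { input: { id: string; name: string }; output: { ok: boolean } };
};

type Handlers = {
  [K in keyof Contract]: (input: Contract[K]["input"]) => Promise<Contract[K]["output"]>;
};

// Backend: this object stops compiling if any handler drifts from the contract.
const handlers: Handlers = {
  async getUser({ id }) {
    return { id, name: "Ada", email: "ada@example.com" };
  },
  async renameUser({ name }) {
    return { ok: name.length > 0 };
  },
};

// Frontend: a typed client over the same contract. A real client would make an
// HTTP request; dispatching in-process keeps the sketch runnable.
async function call<K extends keyof Contract>(
  op: K,
  input: Contract[K]["input"],
): Promise<Contract[K]["output"]> {
  return handlers[op](input);
}

// If `email` were renamed in Contract, this frontend line would fail the next type-check.
async function showEmail(id: string): Promise<string> {
  const user = await call("getUser", { id });
  return user.email;
}

showEmail("u1").then((email) => console.log(email)); // ada@example.com
```

The design choice is that the contract lives in exactly one place, and both sides are type-checked against it in the same workspace run.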

Build-graph filtering. nx affected -t test and turbo run test --filter=[origin/main] give the agent a fast, scoped feedback loop instead of “run the entire test suite”. The difference between a 30-second confirmation and a 12-minute wait shows up directly in how many iterations the agent gets per session.
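The core of an "affected" computation can be sketched as a walk over reverse dependency edges. It takes the changed packages plus everything that depends on them, directly or transitively. This is a simplified illustration with hypothetical package names. Nx and Turborepo derive the graph from workspace manifests and the change set from git, and layer task inputs and caching on top. None of that is modelled here.

```typescript
// package -> packages it depends on
type DepGraph = Record<string, string[]>;

function affected(graph: DepGraph, changed: string[]): string[] {
  // Invert the edges: for each package, which packages depend on it?
  const dependents = new Map<string, string[]>();
  for (const [pkg, deps] of Object.entries(graph)) {
    for (const d of deps) {
      dependents.set(d, [...(dependents.get(d) ?? []), pkg]);
    }
  }
  // Breadth-first walk from the changed packages along reverse edges.
  const seen = new Set<string>(changed);
  const queue = [...changed];
  while (queue.length > 0) {
    const pkg = queue.shift()!;
    for (const dep of dependents.get(pkg) ?? []) {
      if (!seen.has(dep)) {
        seen.add(dep);
        queue.push(dep);
      }
    }
  }
  return Array.from(seen).sort();
}

const workspace: DepGraph = {
  contracts: [],
  api: ["contracts"],
  web: ["contracts", "ui"],
  ui: [],
  docs: [],
};

console.log(affected(workspace, ["contracts"]).join(" ")); // api contracts web
console.log(affected(workspace, ["ui"]).join(" ")); // ui web
```

A change to `ui` never touches `api` or `docs`, so the agent's test run shrinks to the slice the graph says can break.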

The tooling has caught up. Turborepo 2.8 ships an Agent Skill, worktree cache sharing for parallel agents, and AI-friendly Markdown docs that respond in plain prose to preserve context windows (Turborepo, 2026). Nx’s 2026 roadmap is explicit: “the year Nx becomes infrastructure for autonomous AI agents”, with a Claude Code plugin, an MCP server exposing the project graph, and six on-demand Agent Skills (Nx, 2026).

None of this is a vendor pitch. The point is not Turborepo or Nx specifically; it is that cross-cutting type-safe contracts are a context surface in their own right. A flat polyrepo gives the agent fragments. A monorepo gives it a graph.

Code-graph tooling is the substrate

The clearest empirical case for structural retrieval is Aider’s. The tree-sitter repo map identifies the correct file to edit on 70.3% of SWE-Bench Lite tasks, and Aider posts an end-to-end resolve rate of 26.3% with no RAG and no vector database (Aider, 2024). PageRank over a symbol graph, top-N signatures into the prompt under a token budget, and the right file shows up seven times out of ten.
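The "top-N signatures under a token budget" step can be sketched as a greedy pass over ranked signatures. Token counts here are approximated as ceil(chars / 4), a rough heuristic rather than a real tokenizer. The ranks would come from something like PageRank. `RankedSignature` and `buildRepoMap` are hypothetical names, and this is not a claim about how Aider packs its map.

```typescript
interface RankedSignature {
  file: string;
  signature: string;
  rank: number;
}

// Rough token estimate; a real implementation would use the model's tokenizer.
const approxTokens = (s: string): number => Math.ceil(s.length / 4);

function buildRepoMap(sigs: RankedSignature[], budget: number): string {
  const picked: RankedSignature[] = [];
  let used = 0;
  for (const s of [...sigs].sort((a, b) => b.rank - a.rank)) {
    const cost = approxTokens(s.signature);
    if (used + cost > budget) continue; // skip it; a smaller signature may still fit
    picked.push(s);
    used += cost;
  }
  // Group by file so the prompt reads like an outline of the repo.
  const byFile = new Map<string, string[]>();
  for (const s of picked) byFile.set(s.file, [...(byFile.get(s.file) ?? []), s.signature]);
  return Array.from(byFile, ([file, lines]) => `${file}:\n${lines.map((l) => "  " + l).join("\n")}`).join("\n");
}

const sigs: RankedSignature[] = [
  { file: "shared/types.ts", signature: "type User = { id: string; name: string }", rank: 0.4 },
  { file: "db/users.ts", signature: "function findUser(id: string): Promise<User>", rank: 0.3 },
  { file: "web/form.tsx", signature: "function UserForm(props: { user: User }): JSX.Element", rank: 0.1 },
];

const repoMap = buildRepoMap(sigs, 25);
console.log(repoMap);
```

With a budget of 25 estimated tokens, the two highest-ranked signatures fit and `UserForm` is dropped. Only signatures go in, never bodies, which is why explicit, typed signatures carry so much of the weight.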

CodexGraph pushes the same idea further. The NAACL 2025 paper converts a repo into a graph database and lets the LLM write graph queries instead of doing similarity search; it reports 87.1% on SWE-Bench Verified (arXiv 2408.03910, 2025). The authors are explicit that similarity retrieval has “low recall on complex tasks” and that structural retrieval generalises better.

Sourcegraph’s own CodeScaleBench gives a direct before-and-after. Without retrieval, agents recovered 12.7% of the relevant files in multi-repo tasks. With Sourcegraph’s MCP retrieval, file recall climbed to 27.7% and Precision@5 went from 0.140 to 0.478 (Sourcegraph, 2025). Different methodology, same direction.
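For readers who want the two metrics pinned down, here they are under their standard information-retrieval definitions. File recall is the share of relevant files retrieved at all. Precision@k is the share of the first k retrieved files that are relevant. CodeScaleBench's exact scoring may differ in details, and the file lists below are made up.

```typescript
function fileRecall(retrieved: string[], relevant: Set<string>): number {
  if (relevant.size === 0) return 0;
  const found = new Set(retrieved.filter((f) => relevant.has(f)));
  return found.size / relevant.size;
}

function precisionAtK(retrieved: string[], relevant: Set<string>, k: number): number {
  if (k <= 0) return 0;
  const hits = retrieved.slice(0, k).filter((f) => relevant.has(f)).length;
  return hits / k; // denominator stays k even if fewer than k files came back
}

const relevant = new Set(["api/handler.ts", "shared/types.ts", "web/form.tsx", "db/users.ts"]);
const retrieved = ["api/handler.ts", "README.md", "shared/types.ts", "package.json", "tsconfig.json", "web/form.tsx"];

console.log(fileRecall(retrieved, relevant)); // 0.75
console.log(precisionAtK(retrieved, relevant, 5)); // 0.4
```

Recall rewards finding the files eventually. Precision@5 rewards putting them first, which is what a context-limited agent actually consumes.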

Cursor is the loudest counter-example: pure chunked embeddings, no symbol graph, and still one of the most widely used coding agents. Vector retrieval is the floor, not the ceiling. The point is not “embeddings are dead”. The point is that the most defensible numbers come from agents that read code as code.

The protocol layer is where most of the 2025-2026 movement happened. SCIP moved from a Sourcegraph-owned format to an independent open-governance project with Uber and Meta on the steering committee (Sourcegraph, 2025). Anthropic donated MCP to the Linux Foundation in December 2025. Zed’s Agent Client Protocol crossed 25 supporting agents by March 2026. Code shape matters more now because more layers read it directly. An agent running on top of structure-aware search lives or dies by what your codebase exposes.

The gotchas I have hit

Six failure modes. All observed in practice, all named for shorthand.

Long files. Cline’s auto-compact prunes older file reads to keep context fresh (Cline, 2025). Cursor’s chunker assumes function- and class-shaped boundaries. Anthropic’s context engineering guidance warns that recall degrades monotonically with context length (Anthropic, 2025). A 2,000-line file degrades chunking, retrieval, and attention at once. Split.
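To see why one giant function defeats declaration-based chunking, here is a deliberately naive chunker. It cuts at top-level `function`/`class` lines, then hard-splits any chunk longer than `maxLines`. This is a toy regex chunker written for illustration, not Cursor's. The `maxLines` limit is an assumption standing in for whatever size cap a real chunker enforces.

```typescript
// Naive chunker: cut at top-level function/class declarations, then
// hard-split any chunk longer than maxLines at arbitrary line offsets.
function chunk(source: string, maxLines: number): string[][] {
  const chunks: string[][] = [];
  let current: string[] = [];
  for (const line of source.split("\n")) {
    const startsDecl = /^(export\s+)?(async\s+)?(function|class)\s/.test(line);
    if (startsDecl && current.length > 0) {
      chunks.push(current);
      current = [];
    }
    current.push(line);
  }
  if (current.length > 0) chunks.push(current);

  // Oversized chunks are cut mid-body; only the first piece keeps the signature.
  const out: string[][] = [];
  for (const c of chunks) {
    for (let i = 0; i < c.length; i += maxLines) out.push(c.slice(i, i + maxLines));
  }
  return out;
}

const small = ["function a() {", "  return 1;", "}", "function b() {", "  return 2;", "}"].join("\n");
const giant = [
  "function handleEverything() {",
  ...Array.from({ length: 248 }, (_, i) => `  step${i}();`),
  "}",
].join("\n");

console.log(chunk(small, 100).length); // 2
console.log(chunk(giant, 100).map((c) => c.length).join(", ")); // 100, 100, 50
```

The second and third chunks of `handleEverything` carry no function name. A query about that function is unlikely to retrieve them, even though they hold most of its behaviour.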

Generated code committed to the repo. GraphQL types, Prisma outputs, OpenAPI SDKs, protobuf bindings. The agent edits the artefact, the next codegen run overwrites the work. Gitignore the output, write an AGENTS.md rule pointing at the source schema, regenerate on demand.
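A complementary guard can live in the agent harness. Before editing a file, check for a generated-code marker and redirect the edit to the source schema. The Go header convention (`// Code generated ... DO NOT EDIT.`) is documented and widely followed. `@generated` is a tag some other generators emit. Many tools use their own headers, so treat the marker list as incomplete. `isGenerated` and `guardEdit` are hypothetical names, not part of any agent's API.

```typescript
// Markers that identify generated files. Incomplete by design: extend per codegen tool.
const GENERATED_MARKERS: RegExp[] = [
  /^\/\/ Code generated .* DO NOT EDIT\.$/m, // Go's documented convention
  /@generated\b/, // tag emitted by some other generators
];

function isGenerated(content: string): boolean {
  // Markers conventionally sit in the file header, so only inspect the top.
  const head = content.split("\n").slice(0, 20).join("\n");
  return GENERATED_MARKERS.some((re) => re.test(head));
}

// Hypothetical pre-edit hook: refuse edits to artefacts and name the real source.
function guardEdit(path: string, content: string, sourceHint: string): { allowed: boolean; reason?: string } {
  if (!isGenerated(content)) return { allowed: true };
  return { allowed: false, reason: `${path} is generated; edit ${sourceHint} and rerun codegen instead.` };
}

const goFile = "// Code generated by protoc-gen-go. DO NOT EDIT.\npackage pb\n";
console.log(isGenerated(goFile)); // true
```

The refusal message is the useful part. It turns a silent overwrite on the next codegen run into an immediate pointer at the schema the agent should have edited.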

Default-full-test-suite. Agents run everything unless taught the affected slice. nx affected and turbo run --filter=[origin/main] exist for this reason; both Nx and Turborepo ship Agent Skills that wire the commands into the loop (Nx, 2026). Faster cycles equal more turns before the agent gets stuck.

Multiple-agent-rules-files drift. Mercari ran Cursor, Claude Code, Copilot, and Codex CLI in parallel and ended up with four divergent rules files that contradicted each other (Mercari Engineering, Oct 2025). One canonical AGENTS.md, audited in CI. If you support more than one agent, pick one source of truth.
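The "audited in CI" part can be as small as a function that fails the build when an agent-specific rules file diverges from AGENTS.md. The file names below are the usual locations for Claude Code, Copilot and Cursor's legacy rules file; Cursor's newer `.cursor/rules` directory is not covered. Verify them against each agent's current docs. The "pointer" format is an assumption of this sketch: either an exact copy or a one-line reference to AGENTS.md.

```typescript
// Agent-specific rules files to audit against the canonical AGENTS.md.
const AGENT_RULES_FILES = ["CLAUDE.md", ".github/copilot-instructions.md", ".cursorrules"];

// `files` maps repo-relative paths to contents; absent files are simply missing keys.
function findDrift(files: Record<string, string | undefined>): string[] {
  const canonical = files["AGENTS.md"];
  if (canonical === undefined) return ["AGENTS.md is missing"];
  const problems: string[] = [];
  for (const path of AGENT_RULES_FILES) {
    const content = files[path];
    if (content === undefined) continue; // not having the file is fine
    const isCopy = content.trim() === canonical.trim();
    // Assumed pointer forms: an import line or a one-line "see AGENTS.md".
    const isPointer = /^(@AGENTS\.md|see agents\.md\.?)$/i.test(content.trim());
    if (!isCopy && !isPointer) problems.push(`${path} diverges from AGENTS.md`);
  }
  return problems;
}

console.log(findDrift({ "AGENTS.md": "Use pnpm.", ".cursorrules": "Use npm." }));
```

A non-empty result fails the job. Drift then becomes a review comment instead of four agents quietly following four different rulebooks.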

Magic at runtime. Decorators that monkey-patch, dependency injection keyed by string, plugin auto-discovery, runtime metaclass tricks. The agent cannot statically resolve where behaviour comes from. The codebase becomes opaque at the layer the agent operates on, and the agent compensates by guessing. It guesses wrong.
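A small contrast makes the static-resolution point concrete. Both versions charge a card at runtime. Only the second lets the compiler, LSP references, or a tree-sitter index follow the dependency from call site to implementation. The container, class and key names are all hypothetical.

```typescript
interface PaymentService {
  charge(cents: number): string;
}

class CardPayments implements PaymentService {
  charge(cents: number): string {
    return `charged ${cents}`;
  }
}

// 1. String-keyed container: "payments" links to a class only at runtime, so
//    go-to-definition on resolve("payments") lands in the container, not the service.
const registry = new Map<string, () => unknown>();
registry.set("payments", () => new CardPayments());

function resolve<T>(key: string): T {
  const factory = registry.get(key);
  if (!factory) throw new Error(`no provider for ${key}`);
  return factory() as T; // unchecked cast: the compiler cannot verify the key
}

class CheckoutViaContainer {
  private payments = resolve<PaymentService>("payments");
  pay(cents: number): string {
    return this.payments.charge(cents);
  }
}

// 2. Explicit constructor injection: type and call site are both statically visible.
class Checkout {
  private readonly payments: PaymentService;
  constructor(payments: PaymentService) {
    this.payments = payments;
  }
  pay(cents: number): string {
    return this.payments.charge(cents);
  }
}

const a = new CheckoutViaContainer().pay(500);
const b = new Checkout(new CardPayments()).pay(500);
console.log(a, b === a); // charged 500 true
```

The failure mode shows up with a typo. `resolve("paymnts")` type-checks fine and only throws when the code runs, which is exactly the layer an agent cannot see.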

DRY hurts agents more than humans. The contrarian one. Indirection costs the agent a context hop every time it follows a wrapper to its implementation. An abstraction a human reads once and internalises is, for an agent, three retrievals across three files just to confirm what the call does. Three lines of duplication often outperform a perfectly factored helper. Repeat yourself when the alternative is hiding the work.

The pattern across all six is the same: codebases that hide structure at runtime force the agent to reconstruct it at inference time, and the scaffolding around the model only goes so far. Make the structure legible.

Strong typing is a verifier loop

Typed languages give agents a tight, deterministic feedback loop: the borrow checker, the TypeScript compiler, go vet, mypy --strict. Each rejects a class of plausible but wrong edits before they ship, and “rejected” is a signal the agent can hill-climb against.
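One of the cheapest verifier loops TypeScript offers is an exhaustiveness check over a discriminated union. Suppose an edit adds a variant to `DomainEvent` but misses the switch that handles it. Then `e` is no longer `never` in the default branch, and the call to `assertNever` stops compiling. The error points at the exact switch to fix. The type and function names are hypothetical.

```typescript
type DomainEvent =
  | { kind: "created"; id: string }
  | { kind: "renamed"; id: string; name: string }
  | { kind: "deleted"; id: string };

// Compile-time guard: only accepts `never`. The runtime throw is a backstop for bad data.
function assertNever(x: never): never {
  throw new Error(`unhandled variant: ${JSON.stringify(x)}`);
}

function describeEvent(e: DomainEvent): string {
  switch (e.kind) {
    case "created":
      return `created ${e.id}`;
    case "renamed":
      return `renamed ${e.id} to ${e.name}`;
    case "deleted":
      return `deleted ${e.id}`;
    default:
      // Adding { kind: "archived" } to DomainEvent without a case makes this line a type error.
      return assertNever(e);
  }
}

console.log(describeEvent({ kind: "renamed", id: "42", name: "Ada" })); // renamed 42 to Ada
```

The same missing case in untyped code compiles, runs, and falls through silently. Here it is a red build in seconds.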

The Aider Polyglot leaderboard breaks results down by language. Rust and Go sit consistently at the top of the per-language pass rates; JavaScript and C++ sit lower (Aider leaderboard, 2026). The variable is not the prestige of the language. It is whether the toolchain produces a deterministic green/red signal in seconds.

A 2026 paper on automated Rust issue resolution makes the mechanism explicit: the borrow checker rejects whole categories of plausible-looking agent edits before they ever land in CI (arXiv 2602.22764, 2026). Python has no borrow checker, so an aliasing bug such as mutating a list another caller still holds ships silently there. The same edit in Rust is a compile error, and the agent is told to try again.

This compounds in a typed monorepo. A backend change surfaces compile errors in the frontend on the agent's next type-check, in the same session and the same workspace. Without strict types, the same change passes silently and the agent declares victory while production breaks.

This is not “TypeScript good, Python bad”. It is “pick a language with a deterministic verifier and uniform tooling, then stay strict”. Cargo, tsc --strict, mypy --strict, go vet. The strictness is the verifier loop. Lose the strictness and you lose the loop.

The principle, stated plainly

Agent-friendly code is mostly just legible code with good feedback loops. The novelty is not new principles. It is that the cost of ignoring the old ones is now metered per token, per second, per wrong-file edit.

Two heuristics earn the closing slot.

If the agent had to read three files to make this change, the change should have been one file. Indirection is a tax. Rebuild for locality.

If the agent ran the full test suite for a one-line edit, the build graph is invisible to it. Teach it the affected slice.

Everything else is a corollary. Smaller files, stronger types, explicit imports, clean module boundaries, generated code out of the way, runtime magic banished, contracts shared across packages instead of remembered. Treat the agent as a team member if you like, but a team member is only as good as the workshop you give them.

The model is not your bottleneck. The codebase is.

If it was useful, pass it along.